David Black, Bastian Ganze, Julian Hettig, Christian Hansen

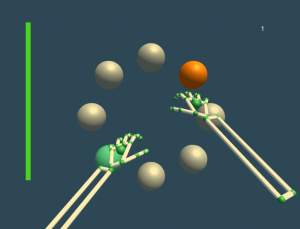

Experimental task: the participant has just selected a sphere (green).

Free-hand gesture recognition technologies allow touchless interaction with a range of applications. However, touchless interaction concepts usually provide only primary visual feedback on a screen. The lack of secondary tactile feedback, such as that of pressing a key or clicking a mouse, is one reason that free-hand gestures have not been adopted as a standard means of input.

This work explores the use of auditory display to improve free-hand gesture interaction. Gestures captured with a Leap Motion controller were augmented with auditory icons and continuous, model-based sonification. Three concepts were generated and evaluated using a sphere-selection task and a video frame selection task. The user experience of the participants was evaluated using the NASA-TLX and QUESI questionnaires. Results show that the combination of auditory and visual display outperforms both purely auditory and purely visual displays in terms of subjective workload and performance measures.